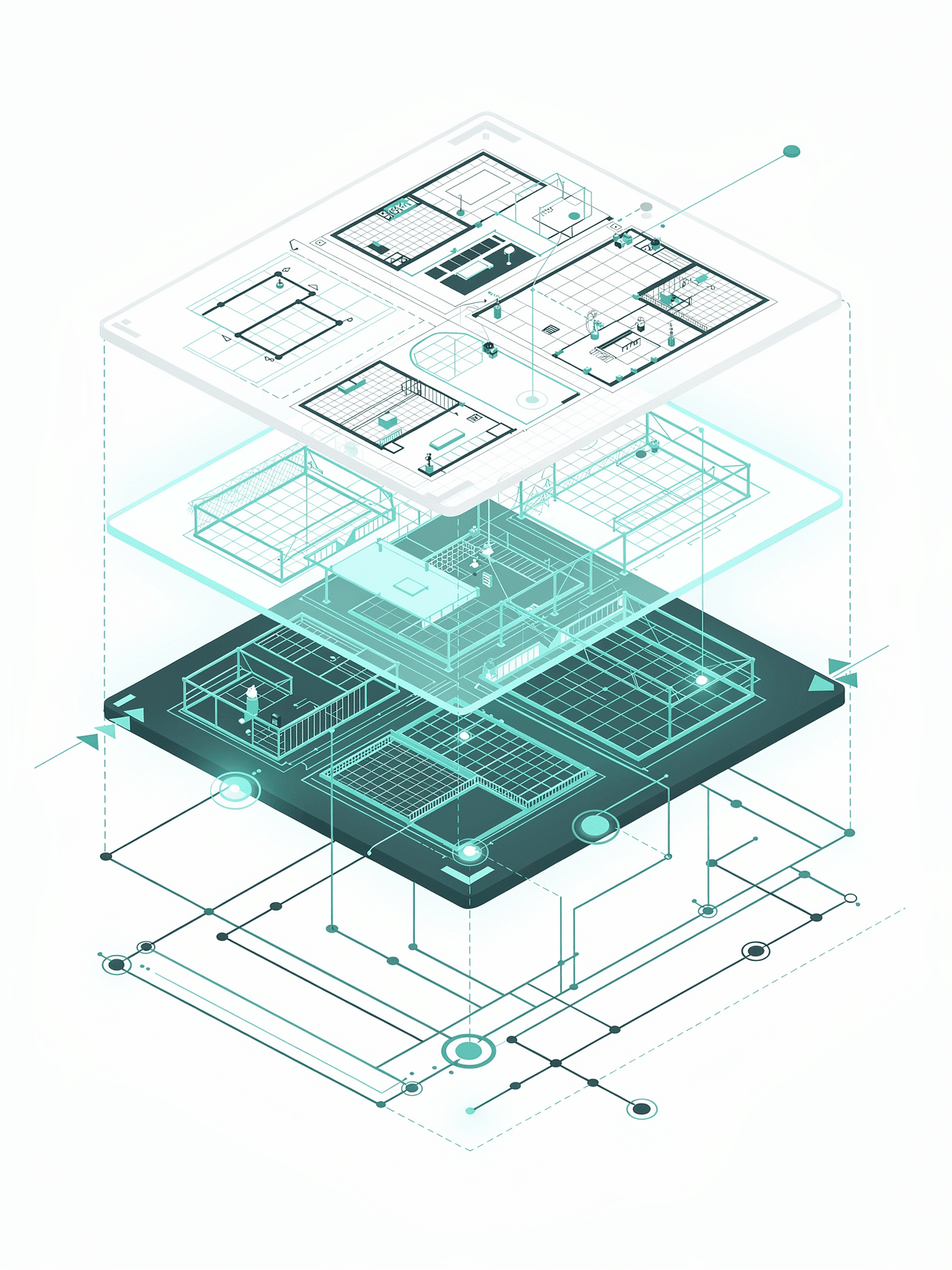

The Four-Layer Visibility Stack

A diagnostic model for identifying where in the generative search retrieval chain a brand is losing visibility — and directing strategy across every channel accordingly.

Not a content framework. A systems-level instrument.

How most GEO agencies operate

Most GEO agencies optimise individual content assets.

How Evolv operates

Evolv operates at the programme level. It uses the Four-Layer Visibility Stack to locate where in the generative search retrieval chain a brand is losing visibility, then directs strategy across every channel from that diagnosis. AI search failure is treated as a systems problem, not a content problem.

A visibility gap at any single layer can suppress performance across all four. Diagnosing the primary failure layer before deploying resources is the core function of an Evolv audit.

Overview: The Stack

The Four Layers — Evaluated in Sequence, Diagnosed as a System

L1 — Model

How the AI model understands, classifies, and associates your brand — independent of live retrieval

L2 — Retrieval

Whether your content surfaces during real-time web retrieval — crawlability, indexation, and semantic density

L3 — Distribution

The authority and citation signals that determine whether AI sources trust and reference your brand

L4 — Browser

How your brand appears in AI-native interfaces and agentic browsing environments at the point of decision

Layer 1: Model

The latent knowledge an AI model holds about your brand — its category membership, associations, product understanding, and perceived authority — formed during training, independent of what your website contains today.

Model-layer failure is the most commonly misdiagnosed visibility problem. Brands invest in content production and technical SEO while their core issue is that the model does not know who they are. No amount of retrieval-layer optimisation fixes a model that has incorrect or absent brand associations. The diagnostic question is simple: when an AI model is asked about the category your brand should own, does it surface you accurately?

Failure Signal

The model cannot accurately categorise the brand, confuses it with competitors, omits it entirely from category-level responses, or surfaces outdated or incorrect product information regardless of content quality.

Healthy State

The model consistently associates the brand with the correct category, product names, and competitive context. It surfaces the brand unprompted when category queries are made by target buyers.

Evolv Diagnosis

Systematic prompt testing across ChatGPT, Perplexity, Gemini, and Claude. Entity consistency audit across structured data, Wikipedia, Wikidata, and third-party citations. Identification of model-layer misattribution or absence.
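As a rough illustration of what systematic prompt testing can measure, the sketch below computes how often a brand is mentioned per platform. It assumes the answers have already been collected by hand or by export; the `mention_rate` helper, the brand names, and the sample answers are all hypothetical, and nothing here calls a real AI platform.

```python
from collections import defaultdict

def mention_rate(responses, brand, aliases=()):
    """Share of responses per platform that mention the brand (case-insensitive)."""
    names = [name.lower() for name in (brand, *aliases)]
    hits = defaultdict(int)
    totals = defaultdict(int)
    for platform, text in responses:
        totals[platform] += 1
        lowered = text.lower()
        if any(name in lowered for name in names):
            hits[platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}

# Hypothetical answers to a single category-level prompt (not real output).
sample = [
    ("ChatGPT", "Leading options include Acme Analytics and Globex."),
    ("ChatGPT", "Globex and Initech are the most common choices."),
    ("Perplexity", "Acme Analytics is frequently recommended."),
]

rates = mention_rate(sample, "Acme Analytics", aliases=("Acme",))
print(rates)
```

A simple substring match like this only detects presence. It says nothing about whether the brand was categorised accurately, which still needs a human read of each answer.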

Layer 2: Retrieval

Whether your content is technically and semantically accessible to the retrieval systems that feed live AI responses — including RAG pipelines, embeddings-based search, and standard crawler infrastructure.

Failure Signal

Content exists but is not being cited in real-time AI responses. Crawl data shows access, but semantic density is insufficient for embeddings retrieval. Structured data is absent or malformed.

Healthy State

Content is crawlable, semantically dense, entity-rich, and structured for RAG extraction. FAQPage, HowTo, and DefinedTerm schema are implemented. Content answers discrete questions in extractable units.
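For readers unfamiliar with FAQ structured data, here is a minimal sketch of how question-and-answer pairs map onto schema.org's `FAQPage` type as JSON-LD. The `faq_jsonld` helper is a hypothetical name used for illustration, and the example pair reuses wording from this page.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

doc = faq_jsonld([
    (
        "What is the Four-Layer Visibility Stack?",
        "A diagnostic model for identifying where in the generative search "
        "retrieval chain a brand is losing visibility.",
    ),
])

# The output would normally sit inside a <script type="application/ld+json"> tag.
print(json.dumps(doc, indent=2))
```

Each pair becomes one self-contained question and answer, which is the kind of discrete, extractable unit described above.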

Evolv Diagnosis

Technical crawl audit, schema implementation review, semantic density scoring, internal linking gap analysis, and log file analysis for AI bot access patterns including GPTBot and PerplexityBot.
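The log file step can be pictured with a short sketch that counts requests whose user agent names GPTBot or PerplexityBot. It assumes the common "combined" access log format, where the user agent is the last quoted field; the `count_ai_bot_hits` helper and the sample lines are made up for illustration, and real logs may need a different parser.

```python
import re
from collections import Counter

# Bot tokens named in the text; extend the tuple for other crawlers.
AI_BOTS = ("GPTBot", "PerplexityBot")

# Assumption: combined log format, user agent is the final quoted field.
UA_PATTERN = re.compile(r'"([^"]*)"\s*$')

def count_ai_bot_hits(lines, bots=AI_BOTS):
    """Count log lines per AI bot, matched on the user-agent string."""
    counts = Counter()
    for line in lines:
        match = UA_PATTERN.search(line)
        if not match:
            continue
        user_agent = match.group(1).lower()
        for bot in bots:
            if bot.lower() in user_agent:
                counts[bot] += 1
    return counts

# Made-up log lines using documentation-range IP addresses.
sample_log = [
    '203.0.113.5 - - [10/Mar/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.5 - - [10/Mar/2025:10:00:05 +0000] "GET /faq HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.9 - - [10/Mar/2025:10:01:00 +0000] "GET /faq HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [10/Mar/2025:10:02:00 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

hits = count_ai_bot_hits(sample_log)
print(hits)
```

User-agent strings can be spoofed, so counts like these are a starting point rather than proof of crawler access.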

Why retrieval alone isn't enough

Retrieval-layer issues are the most addressable in the short term and the most common focus of standard GEO agencies. But addressing retrieval in isolation — without model-layer or distribution-layer diagnosis — produces limited results.

A page that is perfectly structured for RAG extraction will still be ignored if the model does not associate the brand with the relevant category, or if distribution signals are insufficient for the AI to treat the source as authoritative.

Layer 3: Distribution

The external authority signals — third-party citations, digital PR coverage, review platform presence, and off-site entity reinforcement — that determine whether AI systems treat your brand as a credible, citable source.

Failure Signal

Brand appears in AI responses only when directly queried by name. Competitors are cited in category-level responses despite weaker on-site content. Off-site brand signals are thin or inconsistent across directories, publications, and review platforms.

Healthy State

Brand is cited across multiple authoritative third-party sources that AI models weight heavily. Review platform presence (G2, Capterra, TrustRadius for SaaS) reinforces category positioning. Digital PR produces citations that the model encounters at training and retrieval stages.

Evolv Diagnosis

Off-site entity audit, citation source mapping, competitor citation comparison on target queries, and briefing of PR and content distribution partners within the unified GSO programme.

Distribution is where Evolv's directive role over other agency partners becomes most operationally significant. PR agencies are briefed on which publications and citation contexts are highest-value for model-layer and retrieval-layer reinforcement — not just for domain authority in the traditional SEO sense. The question is not "are we getting coverage?" but "is the coverage generating the citation signals that AI models weight when forming category associations?"
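The competitor citation comparison described above can be sketched as a simple share calculation. This assumes the domains cited in AI answers have already been recorded per target query; the `citation_share` helper, the queries, and the `.example` domains are hypothetical.

```python
from collections import Counter

def citation_share(cited_domains_by_query, tracked_domains):
    """Share of tracked citations that each tracked domain receives."""
    counts = Counter()
    for domains in cited_domains_by_query.values():
        for domain in domains:
            if domain in tracked_domains:
                counts[domain] += 1
    total = sum(counts.values())
    return {
        domain: (counts[domain] / total if total else 0.0)
        for domain in tracked_domains
    }

# Hypothetical record of which domains were cited for three target queries.
observed = {
    "best analytics platform": ["rival.example", "reviews.example"],
    "analytics platform comparison": ["rival.example", "brand.example"],
    "enterprise analytics tools": ["rival.example"],
}

shares = citation_share(observed, ["brand.example", "rival.example"])
print(shares)
```

In this made-up sample the competitor holds three of the four tracked citations, the kind of gap the off-site audit is meant to surface.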

Layer 4: Browser

How your brand appears in the AI-native interfaces, agentic browsers, and side-panel AI experiences that are increasingly the first point of contact between a buyer and a category response.

Failure Signal

Brand is absent or poorly positioned in AI Overview responses, ChatGPT Browse results, Perplexity answer surfaces, and agentic browser research sessions. Competitor brands consistently appear first or most prominently.

Healthy State

Brand appears consistently across AI-native interfaces with accurate, positive framing. Landing pages are structured to convert traffic arriving from AI referrals. Attribution infrastructure captures AI-sourced sessions.

Evolv Diagnosis

Platform-by-platform visibility testing using Waikay topic scoring, share-of-answer tracking against named competitors, and attribution configuration for AI referral sources in GA4 and UTM frameworks.
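One way to picture attribution for AI referral sources is a small classifier that labels a session by its referrer hostname. This is a sketch only: the hostname list is an illustrative assumption that needs checking against real referral data, `classify_referrer` is a hypothetical helper, and no GA4 API is involved.

```python
from urllib.parse import urlparse

# Illustrative hostname-to-label mapping; an assumption, not an exhaustive list.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}

def classify_referrer(referrer_url):
    """Return an AI platform label for a referrer URL, or None if no match."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, label in AI_REFERRER_HOSTS.items():
        # Match the domain itself or any subdomain of it.
        if host == domain or host.endswith("." + domain):
            return label
    return None

for url in ("https://chatgpt.com/", "https://www.perplexity.ai/search?q=x", "https://www.google.com/"):
    print(url, "->", classify_referrer(url))
```

Sessions that arrive without a referrer cannot be classified this way, which is one reason UTM tagging is used alongside referrer rules.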

The most visible — but not the root cause

Browser-layer performance is the most visible and measurable output of a GSO programme, and the most commonly used as the sole diagnostic instrument. Waikay topic scores and share-of-answer data are useful — but they describe symptoms.

The Stack's function is to trace browser-layer underperformance back to its root cause: a model that misclassifies you, content that fails retrieval, or distribution signals that are too thin for AI systems to weight your source.

How the Layers Interact

The Stack is not a linear pipeline. Failure at any layer can suppress performance at layers above and below it. Understanding these interaction effects is the diagnostic function that distinguishes a programme-level visibility audit from a content audit.

L1 Failure Masks L2 Investment — Strong content, invisible brand

A brand with retrieval-optimised, semantically dense content will still be absent from AI responses if the model layer holds incorrect or absent brand associations. The model cannot cite what it does not categorise correctly. This is the most common pattern in enterprise brands that have invested heavily in content but underinvested in entity clarity and off-site brand reinforcement.

L3 Failure Caps L2 Performance — Retrievable but not trusted

Content that is crawlable and semantically structured will still be deprioritised in AI responses if distribution signals are insufficient for the model to treat the source as authoritative. In competitive categories, thin off-site presence means well-structured pages lose citation share to competitors with stronger third-party reinforcement, regardless of on-site content quality.

L4 Data Misdirects Strategy — Treating symptoms as root cause

Brands that diagnose visibility gaps using only browser-layer metrics — topic scores, share-of-answer, AI Overview presence — will frequently misattribute the cause. Poor browser-layer scores are almost always downstream of L1, L2, or L3 failures. Deploying content production to fix a model-layer problem, or building links to fix a retrieval-layer structural issue, wastes resource and delays improvement.

Compound Failure Pattern — Enterprise tech: the typical profile

In Evolv's enterprise technology client engagements, the most common visibility gap pattern combines three things: weak model-layer entity clarity (product naming inconsistency, category misclassification), strong retrieval infrastructure, and thin distribution signals in the specific publication and citation contexts that AI models weight for the relevant category. The result is high domain authority but low AI citation share.
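The interaction patterns above suggest a simple triage rule: look for the weakest upstream layer before acting on a poor browser-layer score. The sketch below is one hypothetical way to express that rule; the 0 to 1 scores, the 0.6 threshold, and the `primary_failure_layer` helper are illustrative assumptions, not Evolv's scoring method.

```python
LAYERS = ("model", "retrieval", "distribution", "browser")

def primary_failure_layer(scores, threshold=0.6):
    """Pick the weakest upstream layer (L1 to L3) below the threshold.

    Falls back to "browser" only when every upstream layer looks healthy,
    and returns None when no layer is below the threshold.
    """
    upstream_failures = [layer for layer in LAYERS[:3] if scores[layer] < threshold]
    if upstream_failures:
        return min(upstream_failures, key=lambda layer: scores[layer])
    if scores["browser"] < threshold:
        return "browser"
    return None

# Hypothetical scores shaped like the enterprise tech profile described above.
enterprise_profile = {
    "model": 0.3,
    "retrieval": 0.8,
    "distribution": 0.4,
    "browser": 0.35,
}

primary = primary_failure_layer(enterprise_profile)
print(primary)
```

Here the low browser score is treated as a symptom: the rule points at the model layer first, with distribution as the next weakest upstream layer.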

How client engagements map to the Stack

Every Evolv engagement begins with a Four-Layer Visibility Audit: a structured diagnostic that produces a layer-by-layer gap map and a prioritised action framework. This output is the instrument used to direct the work of all agency partners — PR, PPC, content, and technical — under a unified GSO strategy.

Layer Audit

Systematic testing across all four layers. Prompt testing, entity audit, crawl review, citation mapping, and platform-by-platform LLM audit scoring against named competitors.

Gap Map

A prioritised layer-by-layer gap report identifying primary failure layers, interaction effects, and the competitor visibility patterns that define the benchmark.

Programme Brief

Strategic briefs issued to PR, content, PPC, and technical partners. Each brief is grounded in specific layer requirements, not generic channel objectives.

Visibility Tracking

Ongoing topic-level scoring across AI platforms. Layer-specific KPIs reported alongside traditional SEO metrics to track programme progress over time.

Request a Four-Layer Visibility Audit

We diagnose which layer is responsible for your AI visibility gap — and direct your existing agency partners to address it. Structured output delivered within 10 working days.